Officials Are Human Too

Every match win involves some luck. Whether it’s the coin toss or the bounce off a clipped net, some events in a tennis match are outside of a player’s control. And the sport accepts that. But we shouldn’t accept it when it comes to line calls, especially when there is technology that can prevent line call errors.

It is hard to find a better recent example of the frustration of a bad call than the overrule in the semifinal of the Monte Carlo Masters two weeks ago. The call came in the match between David Goffin and Rafael Nadal, and it cost Goffin the 4-2 lead he had earned in the first set.

Clay courts don’t have player challenge systems because the visible marks the ball leaves in the clay are thought to be good enough. But the flaws of that approach were exposed when the chair umpire in the semifinal overruled an ‘out’ call on a long backhand hit by Rafael Nadal. The umpire checked his decision by coming down from his chair to inspect the mark, but he looked at the wrong spot and called the ball ‘in’. Video replay made it clear that the ball was well out, but by then nothing could be done.

Goffin, who has yet to win a Masters title, seemed to feel that fate was against him after that mistake. He couldn’t get his head back in the match from that point on.

The existence of video review and other decision aids in sport is a recognition that even the most well-trained and determined officials can make mistakes. Making calls in elite sport puts enormous pressure on human attention and perception, and no one is infallible.

So how can we reduce preventable errors on calls?

The introduction of the challenge system, which began its rollout in 2006, was a big step toward improving line calls. Working with colleagues at Tennis Australia and with Jeff Sackmann of Tennis Abstract, we recently completed a study of over 1,000 challenges in professional matches. We found that players win more than 1 out of every 3 challenges. That suggests an error rate of around 30% or higher on the small subset of close calls that get challenged in a match.

However, we also found that challenges were underused. Men challenge on only 3 of every 100 points, and women even less often. Men and women play an average of 60 points per set at the Grand Slams, so most sets can be expected to have at most 1 or 2 challenges. Players could afford to use more of their challenges, especially in the later stages of matches, where points matter more.
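The per-set arithmetic above can be sketched in a few lines of Python. The rate and points-per-set figures come from the text; treating challenges as independent events arriving at a constant rate (a Poisson model) is an illustrative assumption of this sketch, not something the study claims, and the constant and function names are hypothetical.

```python
import math

# Figures quoted in the text: about 3 challenges per 100 points (men)
# and an average of about 60 points per Grand Slam set.
CHALLENGES_PER_100_POINTS = 3
POINTS_PER_SET = 60

# Expected number of challenges in a set.
expected = CHALLENGES_PER_100_POINTS * POINTS_PER_SET / 100


def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events under a Poisson(lam) model."""
    return math.exp(-lam) * lam**k / math.factorial(k)


# Assumption (not from the study): challenges occur independently at a
# constant rate, so the count per set is Poisson with mean `expected`.
p_at_most_two = sum(poisson_pmf(k, expected) for k in range(3))

print(f"expected challenges per set: {expected:.1f}")
print(f"P(at most 2 challenges in a set): {p_at_most_two:.2f}")
```

Under that simplifying assumption the mean is 1.8 challenges per set, and roughly 73% of men’s sets would see two or fewer; with women’s lower challenge rate the share would be higher still.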

The current challenge system is a step forward, but the system should be made available at all tour-level events, even on clay.

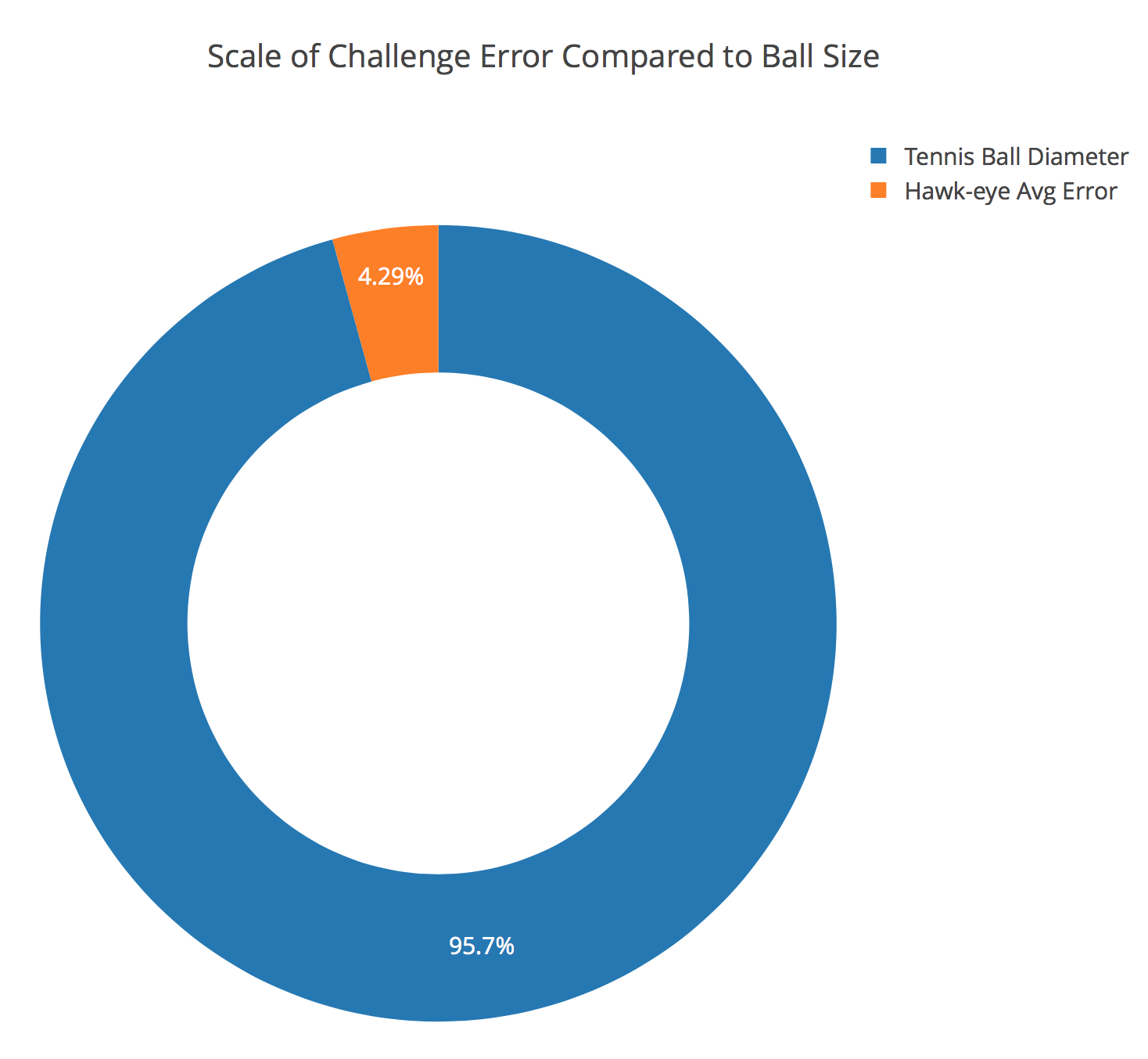

The current system, although less error-prone than human judgment alone, is still fallible. The ball locations you see on the challenge replay are a projection based on a multi-camera system provided by Hawk-Eye Innovations (now owned by Sony). That projection is an estimate, not the “truth”. Of course, the company has worked hard to reduce its system’s margin of error. Reports suggest that the tracking system has an average margin of error of 3 mm. The regulation diameter of a tennis ball, according to the ITF, is 67 mm, so that error is equivalent to about 4.5% of the ball’s width.
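The comparison with the ball’s size is a single ratio. This minimal sketch uses only the two figures given in the text (a reported 3 mm average error and a 67 mm ball); the helper name `as_fraction_of_ball` is hypothetical.

```python
# Figures from the text, in millimetres.
TRACKING_ERROR_MM = 3.0   # reported average tracking error
BALL_DIAMETER_MM = 67.0   # ITF regulation ball diameter


def as_fraction_of_ball(error_mm: float, diameter_mm: float = BALL_DIAMETER_MM) -> float:
    """Express a positional error as a fraction of the ball's diameter."""
    return error_mm / diameter_mm


fraction = as_fraction_of_ball(TRACKING_ERROR_MM)
print(f"{fraction:.1%} of the ball's width")  # prints "4.5% of the ball's width"
```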

That uncertainty may seem small enough, but remember that it is an average: the error on any particular bounce could be larger or smaller, and the system never reports that per-bounce uncertainty during a broadcast.
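To see why an average can hide larger individual errors, here is a rough sketch in Python. It rests on two assumptions that are not in the source: that per-bounce errors follow a zero-mean normal distribution, and that the reported 3 mm figure is a mean absolute error. Under those assumptions the standard deviation can be recovered from the mean absolute error, and the chance that a single bounce is off by more than a given distance follows directly. The function name `prob_error_exceeds` is hypothetical.

```python
import math

MEAN_ABS_ERROR_MM = 3.0  # reported average tracking error, from the text

# Assumption (not from the source): per-bounce errors are normal with mean 0.
# For a zero-mean normal, E|X| = sigma * sqrt(2 / pi), so solve for sigma.
sigma_mm = MEAN_ABS_ERROR_MM * math.sqrt(math.pi / 2)


def prob_error_exceeds(threshold_mm: float, sigma: float = sigma_mm) -> float:
    """P(|error| > threshold) for a zero-mean normal error with std dev sigma."""
    return math.erfc(threshold_mm / (sigma * math.sqrt(2)))


# Chance of a single bounce being off by more than 1x, 2x and 3x the average.
for multiple in (1, 2, 3):
    t = multiple * MEAN_ABS_ERROR_MM
    print(f"P(|error| > {t:.0f} mm) = {prob_error_exceeds(t):.3f}")
```

Under this model roughly 2 in 5 bounces would be off by more than the 3 mm average, and roughly 1 in 9 by more than 6 mm. The real error distribution may well differ, which is exactly why a per-bounce uncertainty would be useful to report.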

Why, we might ask, introduce a system whose mere presence annoys some of the sport’s biggest stars if it doesn’t even provide 100% accuracy?

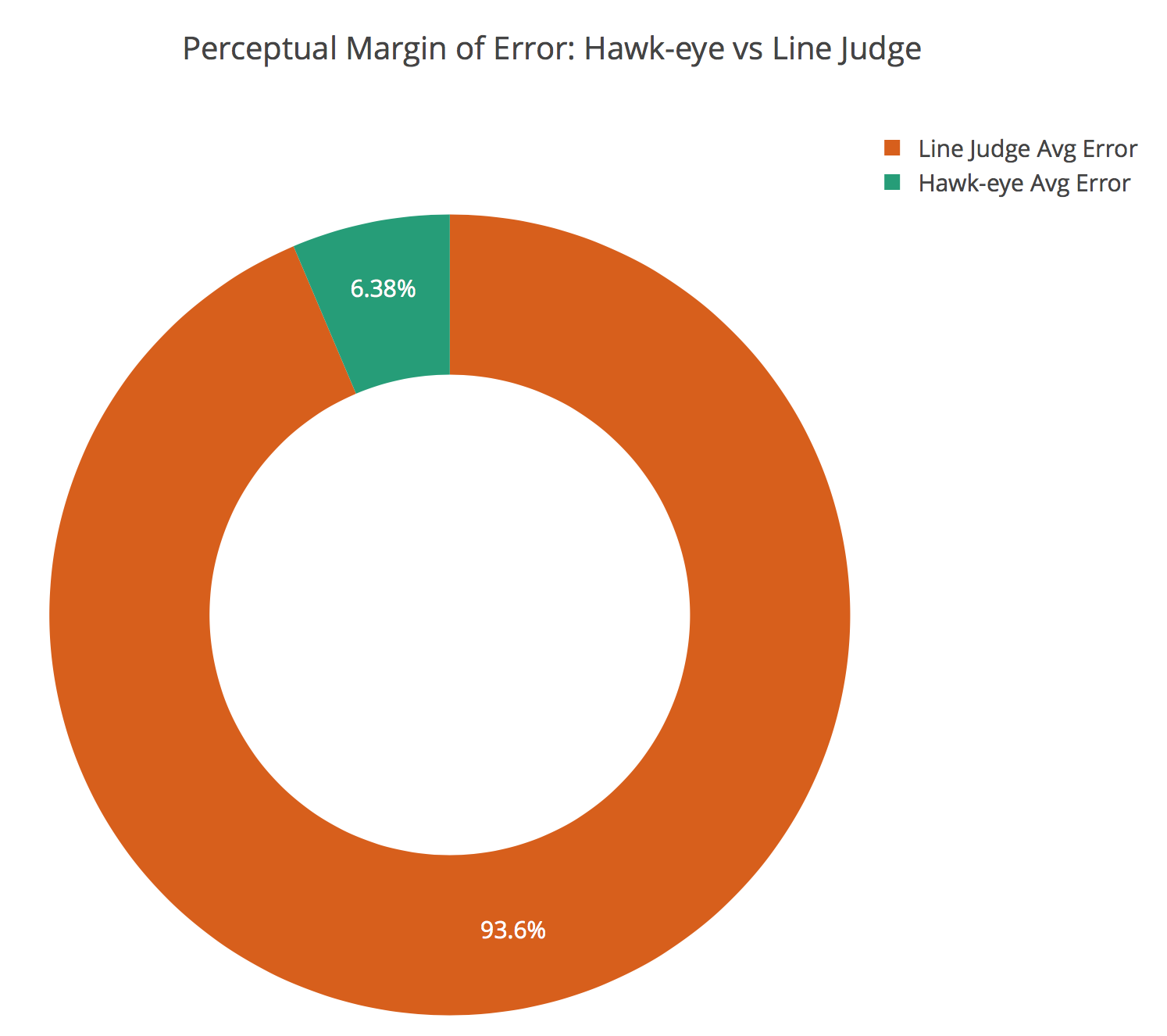

If we want to avoid unlucky line calls, we might still accept a system that is a massive improvement over human judgment, even if it remains imperfect. Research into that question suggests the current challenge system does meet that standard. In a 2008 study in Proceedings of the Royal Society B, George Mather investigated the perceptual uncertainty of tennis line judges and found that it was 40 mm. That is excellent for human perception, but still more than ten times larger than the tracking system’s reported error.
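The size of that gap can be made concrete with the figures already quoted: 40 mm for line judges, 3 mm for the tracking system and 67 mm for the ball. This is a rough comparison only; a perceptual uncertainty estimate and a reported average tracking error are not necessarily measured the same way, so the ratio should be read as an order-of-magnitude indication rather than a precise factor.

```python
import math

# Figures from the text, in millimetres.
HUMAN_UNCERTAINTY_MM = 40.0  # line judges' perceptual uncertainty (Mather, 2008)
TRACKING_ERROR_MM = 3.0      # reported average tracking-system error
BALL_DIAMETER_MM = 67.0      # ITF regulation ball diameter

# How many times larger the human uncertainty is than the system's error.
ratio = HUMAN_UNCERTAINTY_MM / TRACKING_ERROR_MM
# The same gap on a log scale: 1.0 would be exactly one order of magnitude.
orders_of_magnitude = math.log10(ratio)
# Human uncertainty as a share of the ball's width.
human_share_of_ball = HUMAN_UNCERTAINTY_MM / BALL_DIAMETER_MM

print(f"human / system ratio: {ratio:.1f}x")                       # 13.3x
print(f"orders of magnitude apart: {orders_of_magnitude:.2f}")     # 1.12
print(f"human uncertainty vs ball width: {human_share_of_ball:.0%}")  # 60%
```

In other words, the two are about one order of magnitude apart, and a line judge’s uncertainty spans more than half the width of the ball.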

So, even with the inherent flaws of estimation, the challenge system is a useful aid to have in the game. However, the introduction of the system has created a less recognized problem: official bias.

The specific bias I am referring to is a side effect of challenged ‘out’ calls that are successfully overturned by the challenge system. When the ball is found to have been ‘in’ after all, the chair umpire has to decide whether to award the point to the player who made the shot or to replay it. The decision to award the point is equivalent to the chair concluding that the shot couldn’t have been returned. These situations make up roughly 10% of all challenges.

As part of our study of challenges, we compared the officials’ decisions on overturned ‘out’ calls with independent codings by a group of coaches from the National Academies of Tennis Australia. We found that the experts’ judgment of whether a shot was playable agreed with the chair umpire’s judgment around 75% of the time. We also found that most of the disagreement was due to conservative awarding of points; that is, the chair judged shots to be playable more often than the experts did.
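The comparison described above amounts to a paired tally of two judgments per overturned call. The sketch below uses a handful of made-up decisions (not the study’s data) to show how percent agreement and the direction of each disagreement could be counted. The labels `award` and `replay` and the function `summarize` are hypothetical choices for illustration.

```python
from collections import Counter

# Hypothetical toy data, NOT the study's data. Each pair is
# (expert coaches' decision, chair umpire's decision) on an overturned 'out' call:
# "award" = shot judged unreturnable, point awarded; "replay" = point replayed.
decisions = [
    ("award", "award"), ("award", "replay"), ("replay", "replay"),
    ("award", "replay"), ("replay", "replay"), ("replay", "award"),
    ("award", "award"), ("replay", "replay"), ("award", "award"),
    ("replay", "replay"),
]


def summarize(pairs):
    """Return the share of pairs that agree and a count of each kind of disagreement."""
    agree = sum(expert == chair for expert, chair in pairs)
    disagreements = Counter(
        # Chair replays a point the experts would award: the conservative direction.
        "conservative" if chair == "replay" else "liberal"
        for expert, chair in pairs
        if expert != chair
    )
    return agree / len(pairs), disagreements


agreement, disagreements = summarize(decisions)
print(f"agreement: {agreement:.0%}")  # 70% on this toy data
print(dict(disagreements))            # {'conservative': 2, 'liberal': 1}
```

Splitting disagreements by direction, rather than reporting agreement alone, is what reveals a systematic lean toward replaying points.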

This is an example of impact bias. The bias arises when officials are averse to decisions that would have (or at least appear to have) a greater influence on the outcome of the game, such as calling a strike when a batter already has two strikes against him. For tennis chair umpires, awarding a point is likely to seem a higher-impact decision than replaying it, even though they are making an influential decision in either case.

Sadly, this impact bias is much harder to detect than a bad call, yet more likely to occur than the kind of mistake we saw in the Monte Carlo semifinal. But there are ways to remedy it. I would propose an independent review, like the one used in MLB, for every overturned ‘out’ call. Because this review would be anonymous and the decision-makers removed from the match itself, impact bias would be less likely. It is just a matter of making sure the review is fast enough to be acceptable to fans and players.